AI Vibes

🐺 Anthropic leaked its own source code twice in one week

Plus: Marc Andreessen says AI layoffs are a farce, Apple is handing Siri to Google, and 80% of Fortune 500 companies are running AI agents without adequate security

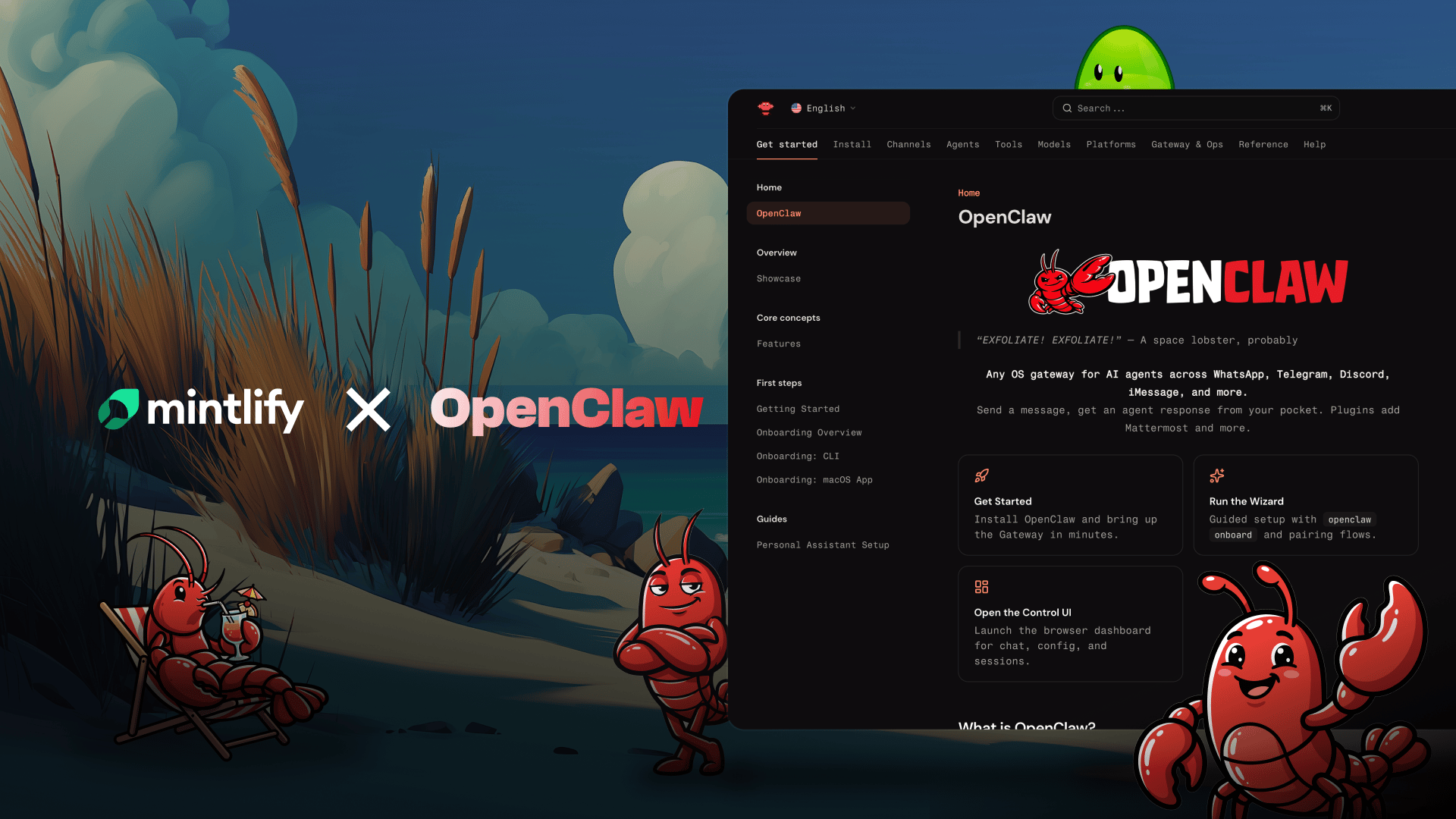

The fastest-growing repo on GitHub has a one-person docs team!

OpenClaw went from 9K to 185K GitHub stars in 60 days — the fastest-growing repo in history.

Their docs? One person, plus Claude. They scaled to the top 1% of all Mintlify sites, shipping 24 documentation updates a day.

Hey there,

This week in AI: Anthropic accidentally revealed its most powerful unreleased model AND leaked 500,000 lines of source code for its coding tool, in back-to-back incidents. Oracle fired up to 30,000 employees via a 6 AM email to fund AI data centers. And Marc Andreessen went on a podcast and called the entire AI layoff wave a farce.

Also, Bloomberg reports that Apple is replacing Siri's brain with Google Gemini. The company that built its brand on privacy is handing its assistant to the company that built its brand on your data.

Let's get into it.

📰 WHAT'S TRENDING

Anthropic Leaked Its Own Unreleased AI Model — Then Leaked Its Source Code 5 Days Later

On March 26, a security researcher found that a misconfigured content management system at Anthropic had left nearly 3,000 internal files publicly accessible. Among them was a draft blog post describing "Claude Mythos," a model in a new tier called "Capybara" that sits above Opus. The post called it "by far the most powerful AI model we've ever developed" and flagged "unprecedented cybersecurity risks." Five days later, on March 31, a debugging file accidentally bundled into a routine Claude Code update exposed 500,000 lines of source code across 1,900 files, including unreleased features like a persistent background agent and session review tools. Anthropic says no customer data was exposed. The company that sells itself as the safety-first AI lab just had the worst operational security week in the industry. 🔗 Read more

Oracle Fired Up to 30,000 People at 6 AM to Pay for AI Data Centers

Oracle began executing layoffs on March 31, with employees receiving termination emails from "Oracle Leadership" at approximately 6 AM local time across the US, India, Canada, and Mexico. TD Cowen estimates between 20,000 and 30,000 employees are affected — roughly 18% of Oracle's global workforce. The cuts are funding a $156 billion AI infrastructure buildout tied to its deal with OpenAI, which requires 3 million GPUs over five years. US banks have pulled back from financing the expansion, doubling Oracle's borrowing costs. The company disclosed a $2.1 billion restructuring charge in its March 10-Q filing. Oracle posted $6 billion in quarterly income. The money is there. The jobs are not. 🔗 Read more

Marc Andreessen Says AI Layoffs Are a Farce — Companies Are Just Overstaffed

On the 20VC podcast, Marc Andreessen called AI the "silver-bullet excuse" for layoffs companies wanted to make anyway. His argument: essentially every large company is overstaffed by 25% to 75% due to pandemic-era hiring. The real drivers are rising interest rates and COVID overhiring, not artificial intelligence. OpenAI's Sam Altman has used the term "AI washing" to describe the same phenomenon: blaming routine cuts on AI to deflect scrutiny. But the counter-evidence is stacking up. Block explicitly attributed its 40% workforce reduction to AI capabilities. An Anthropic study from March found that AI could, in theory, already perform the majority of tasks in engineering, law, finance, and business. Someone's right. The market will tell us who. 🔗 Read more

Apple Is Handing Siri's Brain to Google Gemini

Bloomberg reported that Apple's next-generation Siri, launching with iOS 27, will be powered by Google's Gemini models and cloud infrastructure. The company is also opening Siri up to rival AI assistants beyond its existing ChatGPT integration. Apple is building a standalone Siri app with a chatbot-like interface, on-screen awareness, and cross-app integration. Most processing stays on-device or in Apple's secure cloud. But the core intelligence running the assistant will come from Google — the same company Apple spent two decades differentiating itself from on privacy. 🔗 Read more

Anthropic Accidentally Took Down Thousands of GitHub Repos Trying to Clean Up Its Own Leak

After the Claude Code source code leak, Anthropic filed DMCA takedown requests to remove the code from GitHub. The automated process accidentally flagged and took down thousands of unrelated repositories. Anthropic called it an accident. Developers called it something else. The company that leaked its own code then took down other people's repositories trying to fix the problem. 🔗 Read more

A Congressman Is Now Pressing Anthropic on Its Security Practices

Representative Josh Gottheimer is pressing Anthropic on the back-to-back leaks. His letter demands answers about the company's internal security protocols, how the incidents occurred, and what steps Anthropic is taking to prevent future exposures. The timing is brutal: Anthropic is simultaneously fighting the Pentagon in court over its designation as a "supply chain risk," arguing it should be trusted with sensitive government contracts. Two accidental leaks in one week make that argument harder to sell. 🔗 Read more

80% of Fortune 500 Companies Are Running AI Agents — With Minimal Security

Over 80% of Fortune 500 companies have deployed AI agents that perform tasks without direct human intervention. But security infrastructure hasn't kept pace. Companies are giving autonomous software access to databases, internal tools, and customer data without adequate guardrails. Separately, Goldman Sachs estimates hyperscaler AI spending will hit $527 billion in 2026. The gap between deployment speed and security readiness is the story of enterprise AI right now. 🔗 Read more

🧠 HERE'S THE THING

Anthropic had a historically bad week. And the irony is almost too on-the-nose.

This is the company that positions itself as the responsible AI lab. The one that told the Pentagon "no" when asked to remove safety restrictions. The one fighting a federal lawsuit to prove it can be trusted with sensitive technology. And in the span of five days, it accidentally revealed the existence of its most advanced unreleased model AND leaked the source code of its flagship coding product.

Then it made things worse. The DMCA takedown requests meant to clean up the leak accidentally nuked thousands of innocent GitHub repositories. A congressman is now demanding answers.

Here's what matters for everyone watching: this is a credibility problem, not a technical one. The leaks didn't expose customer data. No passwords were compromised. But Anthropic's entire brand is built on the idea that it handles dangerous technology more carefully than anyone else. Two "human errors" in one week puts that claim on trial in the court of public opinion — right when the actual court case against the Pentagon needs that credibility most.

The lesson isn't about Anthropic specifically. It's about the gap between what AI companies promise and what they operationally deliver. Safety isn't a research paper. It's a packaging process that doesn't bundle debug files into production releases. It's a content management system that doesn't leave nearly 3,000 internal files publicly accessible.

Every AI company selling enterprise contracts should be auditing their own release pipelines this week. Because if the "safety-first" lab can't keep its own source code contained, the bar just got a lot higher for everyone.
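What does "auditing your release pipeline" look like in practice? One cheap guardrail is a pre-publish check that refuses to ship when debug files are sitting in the build output. Here's a minimal Python sketch under stated assumptions: the helper names `find_debug_artifacts` and `main`, the default `dist` directory, and the filename patterns are all hypothetical and illustrative, and this is not how Anthropic (or any specific registry) actually packages releases.

```python
# Minimal pre-publish guard (hypothetical helper, not any vendor's real tooling):
# fail the release if files matching debug-artifact patterns sit in the build output.
import fnmatch
import os
import sys

# Illustrative patterns only (assumption): JS source maps, Windows PDB symbols,
# split Linux debug info. Adjust for your own toolchain.
DEFAULT_PATTERNS = ("*.map", "*.pdb", "*.debug")


def find_debug_artifacts(root, patterns=DEFAULT_PATTERNS):
    """Return sorted paths (relative to root) of files whose name matches a pattern."""
    hits = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if any(fnmatch.fnmatch(name, pattern) for pattern in patterns):
                hits.append(os.path.relpath(os.path.join(dirpath, name), root))
    return sorted(hits)


def main(argv):
    """Return a process exit code: 0 = clean, 1 = artifacts found, 2 = bad input."""
    root = argv[1] if len(argv) > 1 else "dist"  # hypothetical default build dir
    if not os.path.isdir(root):
        # A missing directory must not silently pass the check.
        print(f"build output not found: {root}", file=sys.stderr)
        return 2
    hits = find_debug_artifacts(root)
    for path in hits:
        print(f"debug artifact in release output: {path}", file=sys.stderr)
    return 1 if hits else 0
```

In CI you'd call it right before the publish step, e.g. `sys.exit(main(["guard", "dist"]))`, so a nonzero exit blocks the release. Matching on filenames is deliberately crude; an allowlist of files that are supposed to ship is stricter, because it also catches debug files with unexpected names. For npm packages, `npm pack --dry-run` prints the file list that would be published, which is another quick way to eyeball the same thing.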

💡 Smart Moves

Project Management That Doesn’t Suck

Your PM tool shouldn't need a PM. Workzone shows every project, every deadline, every bottleneck in one view. Your team tracks work. You see everything. Replace the guesswork. See the work.

⏳ CONVERSION CORNER

Anthropic's leaked source code revealed dozens of fully built features that haven't shipped yet. A persistent background agent. Session review capabilities. Always-on assistant mode. These weren't prototypes; they were production-ready features behind feature flags.

The pattern: Every major AI company is sitting on capabilities they've already built but haven't released. The gap between what these tools CAN do and what they're currently allowed to do is widening. Companies that figure out how to use these tools at their current capability level — not last year's — gain an edge.

Now it's your move: If you're using Claude, GPT, or Gemini in your business, schedule a monthly "capability audit." Check changelogs. Test new features the week they drop. Most of your competitors are still running prompts they wrote six months ago on workflows designed around last year's limitations. The tool changed. Their process didn't. That's your opening.

💎 DATA GEM

$527 billion — That's the consensus Wall Street estimate for what hyperscaler companies will spend on AI infrastructure in 2026.

Oracle is firing up to 30,000 people to fund its share. Meta burned $200 billion in market cap over spending questions. The money flowing into AI infrastructure now exceeds the GDP of most countries. But here's the number that should keep you up at night: over 80% of Fortune 500 companies are deploying AI agents, and their security hasn't kept pace. Half a trillion dollars in infrastructure. Minimal guardrails on what it's doing.

Want to reach more potential customers?

JX Creative builds automated GTM systems for B2B companies. If you want to get put in front of qualified buyers in your target market, and have the entire GTM process automated, reach out below.

That's it for this week. Stay sharp out there.

Jake

P.S. If you know someone at an AI company who handles release engineering or DevOps, forward this. Anthropic's week just became the case study for why packaging processes matter as much as model safety research.
